Skill Memory vs. Weight Updates: A Small Win for Memory, a Bigger Bottleneck Underneath

We compared three ways to help a tool-using AI agent improve over time: saving reusable skills, applying MinT-backed weight updates, or doing both. In this run, the simple memory-only path did best at 65.0% final success versus a 62.5% frozen baseline, but every method hit the same deeper bottleneck: a ticket-update schema the agent family never truly learned.

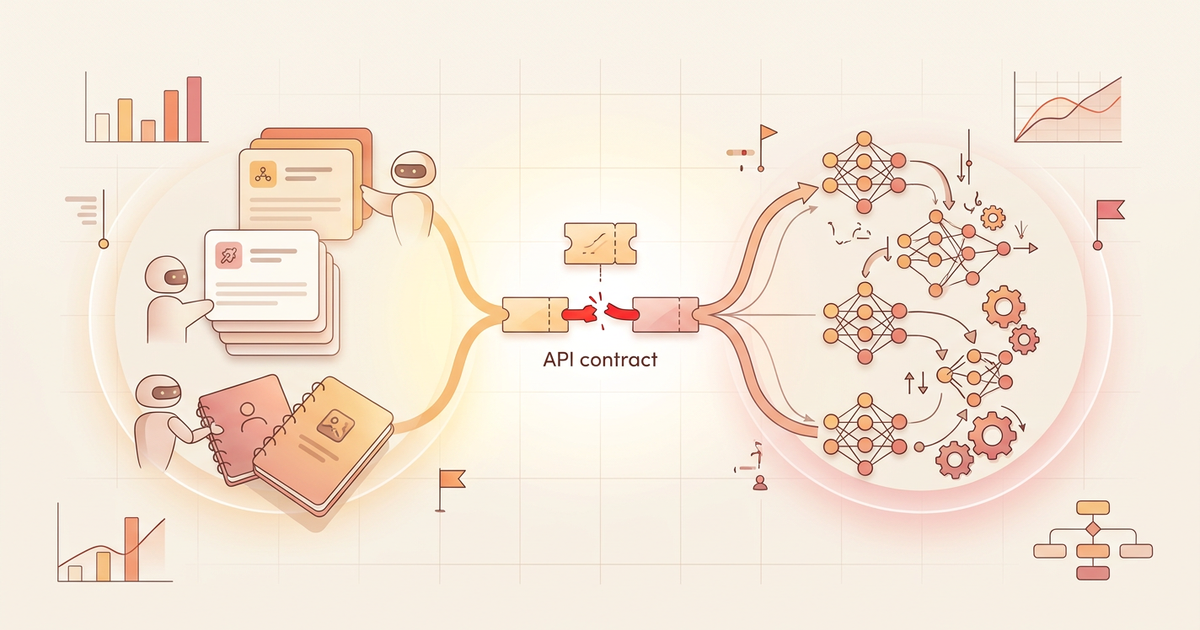

On March 17, 2026 (UTC), we closed a MinT-backed continual-learning study with a very simple question:

If a tool-using AI agent needs to improve over time, is it better to save reusable skills, update the model's weights, or do both?

The short answer is: in this run, simple skill memory came out best - but only by a hair, and it still did not solve the deeper problem.

At the final delayed checkpoint, the best memory-only setup reached 0.650 held-out success, compared with a frozen baseline at 0.625. The two weight-writing paths both finished at 0.600. Across the full delayed curve, memory-only also had the strongest observed area under the learning curve (AULC) at 0.6344.
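The article does not spell out how AULC was computed, so the following is only a minimal sketch under one common assumption: a trapezoidal area over the checkpoint curve, divided by the checkpoint span. The function name `normalized_aulc` and the flat baseline curve are illustrative assumptions, not the study's code or data. Under this definition, a curve that sits at 0.625 at every checkpoint yields 0.625, which is consistent with the frozen-baseline row reported here.

```python
from typing import Sequence


def normalized_aulc(steps: Sequence[float], scores: Sequence[float]) -> float:
    """Trapezoidal area under (steps, scores), divided by the step span.

    Assumed definition for illustration; the study may use a different one.
    """
    if len(steps) != len(scores) or len(steps) < 2:
        raise ValueError("need at least two matching (step, score) points")
    area = 0.0
    for i in range(1, len(steps)):
        width = steps[i] - steps[i - 1]
        if width <= 0:
            raise ValueError("steps must be strictly increasing")
        # Area of one trapezoid between two neighbouring checkpoints.
        area += width * (scores[i] + scores[i - 1]) / 2.0
    return area / (steps[-1] - steps[0])


# Delayed checkpoints named in the article; the flat curve is an assumption
# that a frozen model scores the same at every checkpoint.
checkpoints = [10, 20, 30, 40]
baseline_curve = [0.625, 0.625, 0.625, 0.625]
baseline_aulc = normalized_aulc(checkpoints, baseline_curve)
print(f"{baseline_aulc:.4f}")  # 0.6250
```

Normalizing by the span keeps AULC on the same 0-to-1 scale as success rate, which is what makes it comparable to the final-checkpoint column.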

That sounds like a win. But it is a limited one.

Every scored condition - baseline included - kept crashing into the same stubborn bottleneck: a ticket-editing tool contract that the agent family never truly learned. In the cleanest audit slice, 0 of 45 Phase-3 task-row units passed.

The result, at a glance

| Condition | Final delayed success | Observed delayed AULC | C40 runtime lower bound | What it says |
|---|---|---|---|---|
| Frozen baseline | 0.625 | 0.6250 | 0.000s | A solid floor the adaptive methods had to beat |
| Skill memory only | 0.650 | 0.6344 | 968.861s | Best executed path, but only modestly better |
| Weight updates only | 0.600 | 0.5594 | 2147.155s | More expensive, less reliable in this run |
| Skill memory + weight updates | 0.600 | 0.5781 | 1376.478s | Extra complexity did not buy a stronger finish |

Why this matters

People often talk about AI agents improving over time as if there are only two moods available: either the model keeps learning and gets smarter, or it stays frozen and falls behind.

This study is more useful than that.

It shows that three different stories can all be true at once:

- a lightweight memory layer can be the best engineering choice,

- heavier online weight updates can cost more without producing a better result, and

- neither approach matters much if the agent keeps misunderstanding a critical tool contract.

That makes this a story about where adaptation helps, where it does not, and why a small gain is not the same thing as a solved problem.

What was tested

The primary benchmark in this run was a local continual tool-use sandbox:

- 40 training tasks across 4 phases,

- 20 held-out evaluation tasks,

- 4 tools for search, file reads, file writes, and ticket-state updates.

We compared four conditions under the same held-out harness:

- Frozen baseline: no persistent cross-episode adaptation.

- Skill memory only: the base model stayed frozen, but reusable skills were saved and carried forward.

- Weight updates only: MinT-backed LoRA updates changed the model state over time.

- Skill memory + weight updates: both mechanisms were available together.

The main reporting schedule was delayed: after 10, 20, 30, and 40 training tasks, each condition was evaluated on the same held-out set.
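The delayed reporting schedule can be sketched as a loop that trains on one task at a time and evaluates only at the four boundaries. This is a minimal illustration, not the study's harness: `run_delayed_schedule`, `frozen_train_step`, and `toy_evaluate` are hypothetical names, and the toy evaluator is a stand-in that has nothing to do with the real tools.

```python
from typing import Callable, Dict, Iterable, Sequence, Tuple

CHECKPOINTS: Tuple[int, ...] = (10, 20, 30, 40)


def run_delayed_schedule(
    train_tasks: Iterable[object],
    train_step: Callable[[object], None],
    evaluate: Callable[[Sequence[object]], float],
    held_out: Sequence[object],
    checkpoints: Tuple[int, ...] = CHECKPOINTS,
) -> Dict[int, float]:
    """Adapt on each training task, scoring the held-out set at each checkpoint."""
    curve: Dict[int, float] = {}
    for seen, task in enumerate(train_tasks, start=1):
        train_step(task)  # memory write, weight update, both, or nothing
        if seen in checkpoints:
            curve[seen] = evaluate(held_out)  # same held-out set every time
    return curve


def frozen_train_step(task: object) -> None:
    """Frozen baseline: nothing persists across episodes."""
    return None


def toy_evaluate(held_out: Sequence[int]) -> float:
    """Stand-in scorer: counts even task ids as successes."""
    return sum(1 for t in held_out if t % 2 == 0) / len(held_out)


curve = run_delayed_schedule(range(40), frozen_train_step, toy_evaluate, list(range(20)))
print(curve)  # {10: 0.5, 20: 0.5, 30: 0.5, 40: 0.5}
```

Swapping `train_step` is the only thing that changes between conditions in this sketch, which mirrors the claim that all four were scored under the same held-out harness.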

What actually won

The headline result is narrow but clear:

In this study, saving reusable skills was the best executed adaptive path.

That win matters for two reasons.

First, memory-only finished on top:

- 0.650 final success for skill memory,

- 0.625 for the frozen baseline,

- 0.600 for weight updates only,

- 0.600 for the hybrid path.

Second, it got there more cheaply than either weight-writing alternative, judged by the C40 runtime lower bounds:

- memory-only C40 runtime lower bound: 968.861s,

- weight-updates-only: 2147.155s,

- hybrid: 1376.478s.
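To make the two comparisons concrete, the sketch below recomputes the margins over the frozen baseline and the runtime ratios relative to memory-only, using only the figures reported in this article. The dictionary keys are shorthand labels chosen for this example. Because the runtimes are lower bounds, the ratios compare bounds rather than measured wall-clock totals.

```python
# Final delayed success per condition, as reported above.
final_success = {
    "frozen_baseline": 0.625,
    "skill_memory": 0.650,
    "weight_updates": 0.600,
    "hybrid": 0.600,
}

# C40 runtime lower bounds in seconds, as reported above.
runtime_lower_bound_s = {
    "skill_memory": 968.861,
    "weight_updates": 2147.155,
    "hybrid": 1376.478,
}

baseline = final_success["frozen_baseline"]
margin_vs_baseline = {
    name: round(score - baseline, 3) for name, score in final_success.items()
}

memory_runtime = runtime_lower_bound_s["skill_memory"]
runtime_ratio_vs_memory = {
    name: round(seconds / memory_runtime, 2)
    for name, seconds in runtime_lower_bound_s.items()
}

print(margin_vs_baseline)
# {'frozen_baseline': 0.0, 'skill_memory': 0.025, 'weight_updates': -0.025, 'hybrid': -0.025}
print(runtime_ratio_vs_memory)
# {'skill_memory': 1.0, 'weight_updates': 2.22, 'hybrid': 1.42}
```

Read together, the weight-updates-only path carried roughly 2.2 times the runtime lower bound of memory-only while finishing 0.025 below the baseline.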

So the strongest practical lesson is not that memory is magical. It is that the simpler adaptation path gave the best executed return on complexity in this benchmark.

Why this was not a bigger win

The most important result in the whole run is not the 0.650 score. It is the failure audit underneath it.

Across the scored Phase-3 ticket-mutation slice:

- 0 / 45 task-row units passed,

- 44 / 45 had a malformed first mutation,

- 0 / 45 used the correct nested update schema.
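The audit counts above can be pictured with a small tally function. The article does not publish the actual ticket-update schema, so everything about the payload shape below is a hypothetical stand-in: the sketch assumes a contract of the form `{"ticket_id": ..., "update": {"fields": {...}}}` and treats a flat payload as malformed. The example units are invented for illustration and are not the study's 45 rows.

```python
from typing import Any, Dict, List


def is_valid_nested_update(payload: Any) -> bool:
    """Check a payload against the HYPOTHETICAL nested contract.

    Assumed shape: {"ticket_id": str, "update": {"fields": {non-empty dict}}}
    """
    if not isinstance(payload, dict):
        return False
    if not isinstance(payload.get("ticket_id"), str):
        return False
    update = payload.get("update")
    if not isinstance(update, dict):
        return False
    fields = update.get("fields")
    return isinstance(fields, dict) and len(fields) > 0


def audit_first_mutations(units: List[List[Dict[str, Any]]]) -> Dict[str, int]:
    """Tally per-unit outcomes; each unit is the ordered list of mutation payloads."""
    malformed_first = sum(
        1 for calls in units if calls and not is_valid_nested_update(calls[0])
    )
    used_nested = sum(
        1 for calls in units if any(is_valid_nested_update(c) for c in calls)
    )
    return {
        "units": len(units),
        "malformed_first_mutation": malformed_first,
        "used_correct_nested_schema": used_nested,
    }


# Invented examples, not study data.
example_units = [
    [{"ticket_id": "T-1", "status": "closed"}],                          # flat shape
    [{"ticket_id": "T-2", "update": {"fields": {"status": "closed"}}}],  # nested shape
    [],                                                                  # no mutation attempted
]
report = audit_first_mutations(example_units)
print(report)
# {'units': 3, 'malformed_first_mutation': 1, 'used_correct_nested_schema': 1}
```

Separating "malformed first mutation" from "ever used the nested shape" matters because a unit with no mutation attempt counts toward neither, which is one way 44 of 45 and 0 of 45 can both hold.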

In plain language, the agent often seemed to know what kind of change it wanted to make, but not how to say it in the exact API dialect the tool expected.

That matters because it changes the interpretation of the whole benchmark.

This is not mainly a story about online adaptation failing in the abstract. It is a story about a persistent tool-contract mismatch setting the ceiling for everybody.

If that bottleneck stays in place, then extra RL machinery mostly spends more effort inside a system that is still bumping into the same wall.

A surprise about update timing

One reason to like delayed updates is that they can, in theory, stabilize learning and reduce forgetting.

But the executed checkpoint-20 scheduler comparison pointed the other way.

At that boundary:

- delayed `rl_only` = 0.650, while immediate `rl_only` = 0.675,

- delayed `skills+rl` = 0.600, while immediate `skills+rl` = 0.625.
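The size of the scheduler gap is easy to read off with a two-line calculation over the four checkpoint-20 scores quoted here. The dictionary layout is just a convenience for this example.

```python
# Checkpoint-20 held-out success by condition and update schedule.
checkpoint_20 = {
    "rl_only": {"delayed": 0.650, "immediate": 0.675},
    "skills+rl": {"delayed": 0.600, "immediate": 0.625},
}

# Positive values mean the immediate schedule scored higher.
immediate_minus_delayed = {
    condition: round(scores["immediate"] - scores["delayed"], 3)
    for condition, scores in checkpoint_20.items()
}
print(immediate_minus_delayed)  # {'rl_only': 0.025, 'skills+rl': 0.025}
```

Both gaps are the same size as the final memory-only margin over the baseline, so they deserve the same caution.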

That does not prove immediate updates are always better. It does show that, in this run, the higher checkpoint-20 score belonged to the immediate schedule in both comparisons, not to the MetaClaw-inspired delayed one.

What a visitor should take away

If you only remember four things from this article, make them these:

- Skill memory was the best executed adaptive method. It beat the frozen baseline, but only slightly.

- Weight updates did not earn their extra complexity here. The weight-writing paths had higher runtime lower bounds and finished at 0.600, below both memory-only and the frozen baseline.

- The real ceiling came from a tool-contract bottleneck. The agent family kept sending the wrong ticket-update shape.

- This is a useful negative result. It tells us where to simplify, where to debug, and what not to overclaim.

What this means for future agent design

This run points toward a practical order of operations for teams building tool-using agents:

- start with a lightweight memory layer before reaching for continual RL,

- verify that critical tool contracts are actually being internalized,

- only scale online weight updates after the failure mode is mechanistically understood.

That is a more disciplined lesson than "never update weights" and more honest than "hybrid will eventually win if you wait long enough."

Limits of the result

This write-up should be read carefully.

- It is a single benchmarked run, not a universal law about all agents.

- The strongest positive margin is small: memory-only beats baseline by only +0.025 at the final delayed checkpoint.

- The delayed checkpoint-10 adaptive rows are still single-seed anchors, even though the full reporting grid is now closed.

- The study says more about tool-learning bottlenecks in this environment than about the outer limits of MinT or MetaClaw-style continual training.

Final read

The fairest summary is this:

In this live-agent study, storing skills beat updating weights - but only modestly, and none of the adaptive methods solved the broken ticket-mutation bottleneck underneath.

That makes the result more valuable, not less. It keeps the story honest. It tells visitors where the genuine signal is. And it points the next round of research toward the real blocker instead of toward a bigger training bill.